
TL;DR: Built on a sparse MoE architecture (10B active / 230B total parameters), M2.5 is trained with RL across hundreds of thousands of real-world environments and delivers SOTA performance in agentic coding, tool use, search, office productivity, and a range of other economically valuable tasks. It is also engineered to reason efficiently and decompose tasks effectively, so it completes complex agentic tasks quickly and at low cost.

Now accessible on SiliconFlow at $0.2/M input | $1.0/M output, M2.5 makes frontier-grade agentic intelligence available at the scale that your agentic applications actually demand.
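At these list prices, cost scales linearly with token counts. The minimal Python sketch below estimates the cost of a workload; the token counts in the usage example are hypothetical. Keep in mind that in multi-turn agent loops a stateless chat API typically receives the accumulated conversation as input on every turn, so input tokens can make up most of the volume.

```python
# SiliconFlow list prices for MiniMax M2.5 quoted above, in USD per million tokens.
INPUT_PRICE_PER_M = 0.2
OUTPUT_PRICE_PER_M = 1.0


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD for a workload at the list prices above."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    return (input_tokens * INPUT_PRICE_PER_M
            + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


if __name__ == "__main__":
    # Hypothetical agentic session: 2M input tokens, 300k output tokens.
    print(f"${estimate_cost_usd(2_000_000, 300_000):.2f}")  # → $0.70
```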

We're excited to bring MiniMax M2.5 to SiliconFlow, making one of the most cost-efficient frontier agentic models accessible to developers worldwide. Trained with reinforcement learning across hundreds of thousands of real-world environments, it delivers SOTA coding, tool use, search, and office productivity while remaining economically viable for sustained, large-scale use.

Now, via SiliconFlow's API, you can access:

Budget-Friendly Pricing: MiniMax M2.5 at $0.2/M tokens (input) and $1.0/M tokens (output)

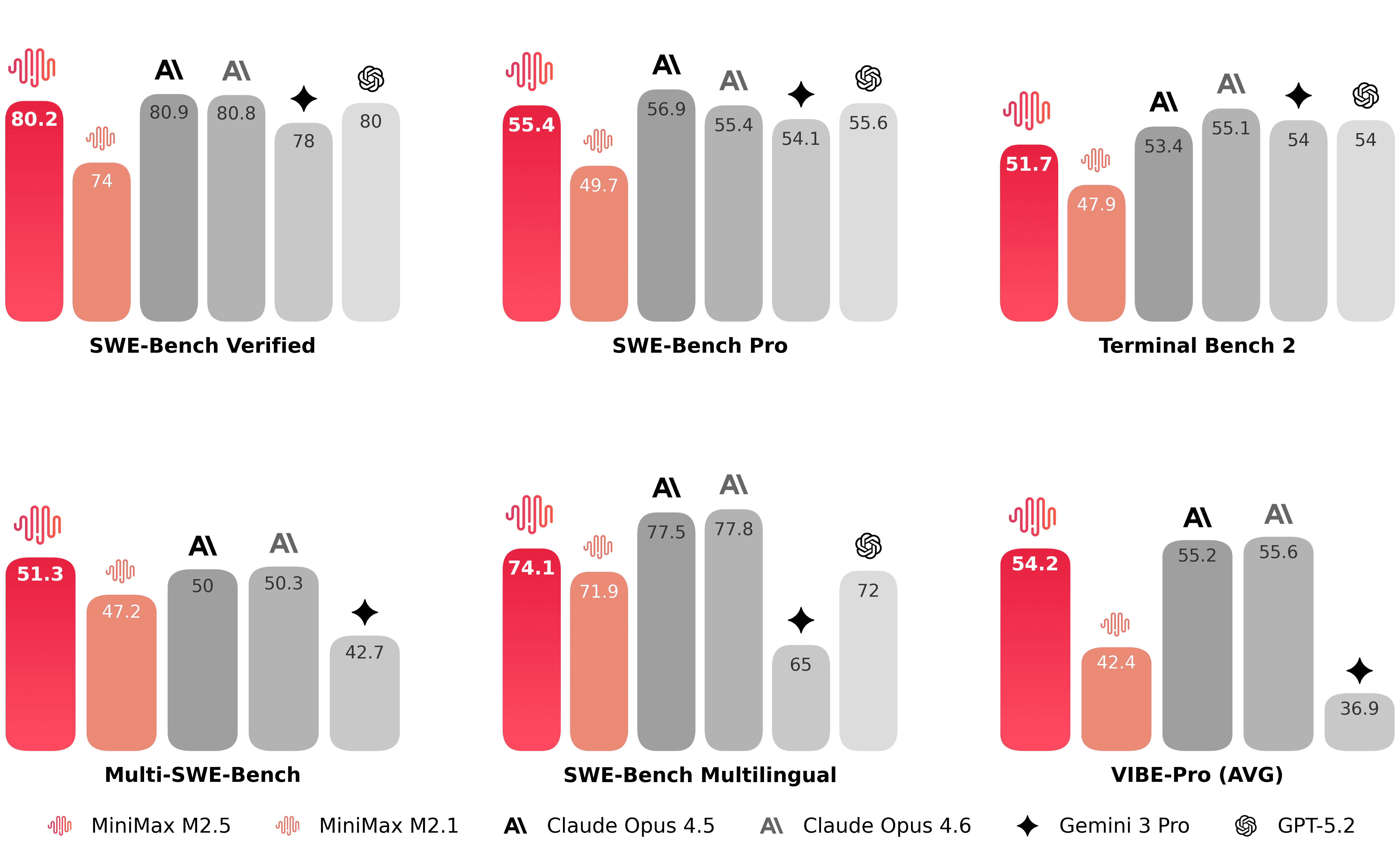

SOTA Coding & Agentic Performance: 80.2% on SWE-Bench Verified, 51.3% on Multi-SWE-Bench, and 76.3% on BrowseComp

Think and Plan Like an Architect: Before writing any code, M2.5 actively decomposes and plans the features, structure, and UI design of the project from the perspective of an experienced software architect.

Seamless Integration: Deploy instantly via SiliconFlow's OpenAI-compatible API, or integrate with Claude Code, Kilo Code, Roo Code, OpenClaw, and more.

Whether you're building coding agents that handle full-stack projects end-to-end, running deep research workflows that require multi-step search and reasoning, or generating professional-grade documents and financial models in office scenarios, SiliconFlow's MiniMax M2.5 API delivers the frontier intelligence you need.

What's New in M2.5

Since late October, MiniMax has successively released M2, M2.1, and now M2.5, bringing significant improvements in the following areas:

Coding Like a Software Architect

A significant improvement over previous generations is M2.5's ability to think and plan like an architect. This spec-writing tendency emerged during training: before writing any code, M2.5 actively decomposes and plans the features, structure, and UI design of the project from the perspective of an experienced software architect.

Trained across 200,000+ real-world environments in 10+ programming languages (including Go, C, C++, TypeScript, Rust, Kotlin, Python, Java, JavaScript, PHP, Lua, Dart, and Ruby), M2.5 reaches SOTA levels in programming evaluations, with especially pronounced gains on multilingual coding tasks.

Beyond bug fixing, M2.5 now covers the entire development lifecycle (0-to-1 system design, feature iteration, code review, and final system testing) across full-stack Web, Android, iOS, and Windows projects.

Search Smarter, Answer Better

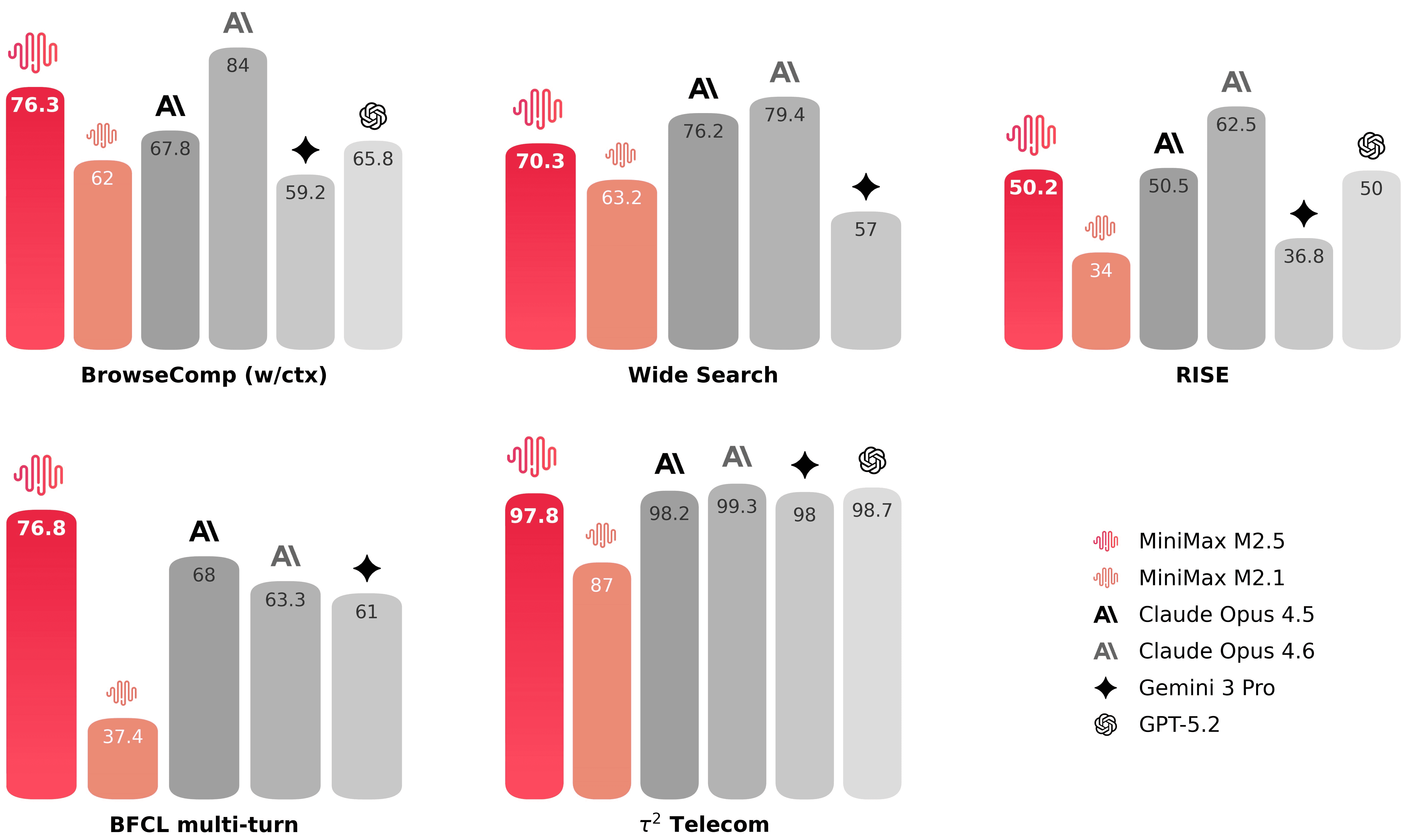

M2.5 no longer just gets the answer right; it also reaches results along more efficient paths. Compared to M2.1, it completes agentic tasks on the BrowseComp, Wide Search, and RISE benchmarks using approximately 20% fewer reasoning rounds, while improving outcome quality.

*RISE (Realistic Interactive Search Evaluation) is MiniMax's internal benchmark for measuring a model's search capabilities on real-world professional tasks, such as navigating information-dense webpages and extracting insights from them.

From Chat to Deliverable Output

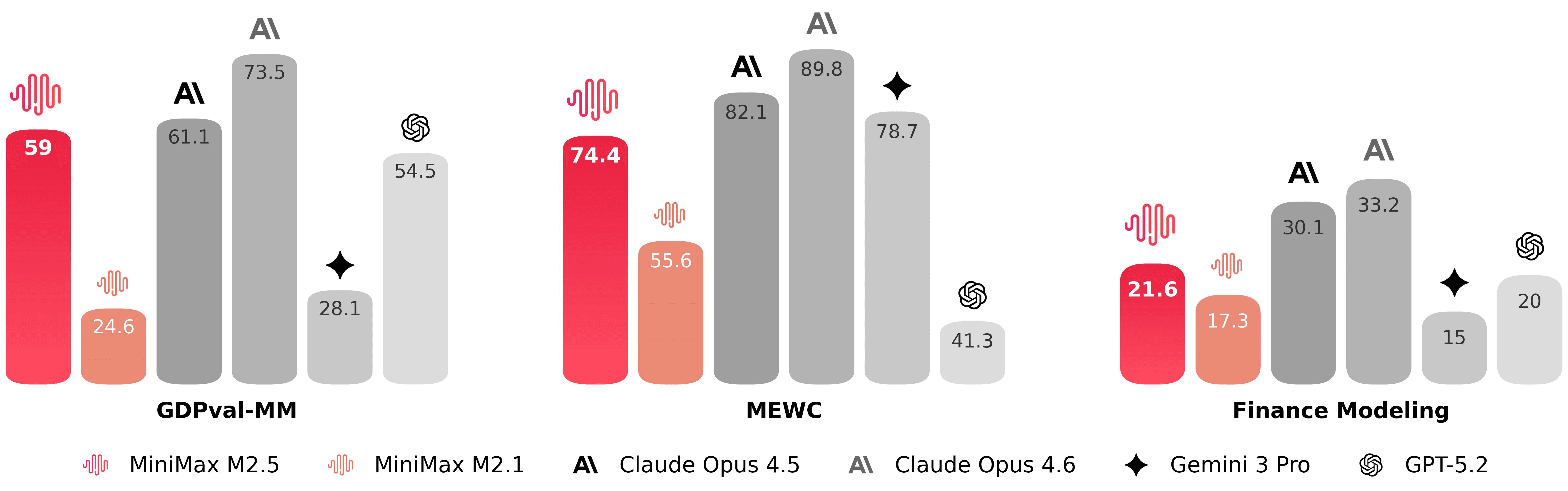

M2.5 is built to deliver truly usable outputs in real-world office workflows. To make that possible, MiniMax worked closely with senior professionals across finance, law, and the social sciences. These experts helped define task standards and design requirements, provided iterative feedback, and contributed high-quality training data directly, embedding tacit industry knowledge into the model.

As a result, M2.5 demonstrates strong performance in high-value workspace scenarios, including document drafting in Word, presentation structuring in PowerPoint, and financial modeling in Excel.

On the evaluation side, M2.5 achieved an average win rate of 59.0% against other mainstream models on GDPval-MM*.

*GDPval-MM, MiniMax's internal Cowork Agent evaluation framework, assesses both the quality of the deliverable and the professionalism of the agent's trajectory through pairwise comparisons, while also monitoring token costs across the entire workflow to estimate the model's real-world productivity gains.
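GDPval-MM's exact scoring method is internal to MiniMax. The sketch below only illustrates one common way to turn pairwise judgments into an average win rate, assuming that a tie counts as half a win and that the average is taken per opponent (a macro-average). The opponent names and outcomes are hypothetical toy data, not benchmark results.

```python
from statistics import mean

# Score per judged outcome; counting a tie as half a win is an assumption.
OUTCOME_SCORE = {"win": 1.0, "tie": 0.5, "loss": 0.0}


def win_rate(outcomes: list[str]) -> float:
    """Fraction of pairwise comparisons won against one opponent (ties = 0.5)."""
    if not outcomes:
        raise ValueError("need at least one comparison")
    return sum(OUTCOME_SCORE[o] for o in outcomes) / len(outcomes)


def average_win_rate(results: dict[str, list[str]]) -> float:
    """Macro-average: compute a win rate per opponent, then average them."""
    return mean(win_rate(outcomes) for outcomes in results.values())


if __name__ == "__main__":
    # Hypothetical toy judgments, not real GDPval-MM data.
    toy = {
        "opponent_a": ["win", "win", "loss", "tie"],   # 0.625
        "opponent_b": ["loss", "win", "tie", "tie"],   # 0.5
    }
    print(average_win_rate(toy))  # → 0.5625
```

A macro-average weights every opponent equally regardless of how many comparisons it received; pooling all judgments into one ratio instead would weight opponents by sample size.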

How It Performs in the Real World

Using MiniMax M2.5 through SiliconFlow's API, we tested the model on a simple office-scenario task: developing a task management tool. Before writing any code, M2.5 acts like an architect, drafting a structured Markdown file that plans the project: core feature summaries, target users, and UI/UX specifications. It then implements a complete Electron application consisting of a main process, an IPC bridge, and a UI layer with task CRUD, category/priority filtering, due-date tracking, and a dark-themed interface with sidebar navigation, task cards, and modal forms.

Here's the prompt we used and the application it produced:

Prompt: Develop a task management tool for workplace use, enabling teams or individuals to organize work tasks, meeting schedules, project deadlines, and daily to-dos, with a clear and aesthetic UI dashboard.
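To make the feature list concrete, here is a minimal Python sketch of the kind of data layer such a tool needs: task CRUD, category/priority filtering, and due-date tracking. It is not the Electron/JavaScript code M2.5 generated; `Task`, `Priority`, and `TaskStore` are hypothetical names, and storage is in-memory purely for illustration.

```python
from dataclasses import dataclass, replace
from datetime import date
from enum import Enum
from itertools import count
from typing import Optional


class Priority(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class Task:
    id: int
    title: str
    category: str                # free-form label, e.g. "meeting" or "project"
    priority: Priority
    due: Optional[date] = None   # None means no deadline
    done: bool = False


class TaskStore:
    """Hypothetical in-memory store; a real app would persist tasks."""

    def __init__(self) -> None:
        self._tasks: dict[int, Task] = {}
        self._next_id = count(1)  # monotonically increasing task IDs

    # --- CRUD ---
    def create(self, title: str, category: str, priority: Priority,
               due: Optional[date] = None) -> Task:
        task = Task(next(self._next_id), title, category, priority, due)
        self._tasks[task.id] = task
        return task

    def read(self, task_id: int) -> Task:
        return self._tasks[task_id]

    def update(self, task_id: int, **changes) -> Task:
        if "id" in changes:
            raise ValueError("task IDs are immutable")
        # Task is frozen, so store a modified copy instead of mutating it.
        task = replace(self._tasks[task_id], **changes)
        self._tasks[task_id] = task
        return task

    def delete(self, task_id: int) -> None:
        del self._tasks[task_id]

    # --- Filtering and due-date tracking ---
    def filter(self, category: Optional[str] = None,
               priority: Optional[Priority] = None) -> list[Task]:
        """Return tasks matching every filter that is not None."""
        return [t for t in self._tasks.values()
                if (category is None or t.category == category)
                and (priority is None or t.priority == priority)]

    def overdue(self, today: date) -> list[Task]:
        """Return unfinished tasks past their due date, earliest first."""
        late = [t for t in self._tasks.values()
                if not t.done and t.due is not None and t.due < today]
        return sorted(late, key=lambda t: t.due)


if __name__ == "__main__":
    store = TaskStore()
    store.create("Quarterly report", "project", Priority.HIGH, date(2025, 1, 10))
    store.create("Team standup", "meeting", Priority.MEDIUM)
    print([t.title for t in store.overdue(date(2025, 1, 15))])  # → ['Quarterly report']
```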

M2.5 Configuration Tips

To get the best performance from MiniMax M2.5 on SiliconFlow, follow these recommended settings:

Default system prompt:

Get Started Immediately

Explore: Try MiniMax M2.5 in the SiliconFlow playground.

Integrate: Use our OpenAI-compatible API. Explore the full API specifications in the SiliconFlow API documentation.
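A minimal sketch of a call to the OpenAI-compatible chat-completions endpoint, using only the Python standard library. The base URL, the model identifier `MiniMaxAI/MiniMax-M2.5`, and the `SILICONFLOW_API_KEY` environment variable name are assumptions; confirm them in the SiliconFlow API documentation and on the model page before use.

```python
import json
import os
import urllib.request

# Assumptions: verify both values in the SiliconFlow API documentation.
BASE_URL = "https://api.siliconflow.com/v1"   # assumed endpoint
MODEL_ID = "MiniMaxAI/MiniMax-M2.5"           # assumed model identifier


def build_request(api_key: str, prompt: str,
                  max_tokens: int = 1024) -> urllib.request.Request:
    """Build an OpenAI-style POST request to /chat/completions."""
    body = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}",
                 "Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str) -> str:
    """Send one prompt and return the assistant's reply text."""
    api_key = os.environ["SILICONFLOW_API_KEY"]  # hypothetical env var name
    with urllib.request.urlopen(build_request(api_key, prompt), timeout=120) as resp:
        payload = json.load(resp)
    # OpenAI-compatible responses carry the reply in choices[0].message.content.
    return payload["choices"][0]["message"]["content"]


if __name__ == "__main__":
    if "SILICONFLOW_API_KEY" in os.environ:
        print(chat("Outline the modules of a small task-management app."))
    else:
        print("Set SILICONFLOW_API_KEY to send a live request.")
```

Because the API is OpenAI-compatible, the official `openai` Python SDK should also work by passing the same base URL as `base_url` when constructing the client.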