
TL;DR: The DeepSeek-V4 series is now available on SiliconFlow. This release introduces two Mixture-of-Experts models with 1M-token context windows and a hybrid attention architecture that needs only 27% of the inference FLOPs of DeepSeek-V3.2, a 73% reduction. DeepSeek-V4-Pro achieves a Codeforces rating of 3206 and 93.5% on LiveCodeBench, placing it among the strongest open-source models available. Start building with SiliconFlow's API to explore million-token context intelligence.

Overview: Million-Token Context Intelligence

DeepSeek-V4 brings two Mixture-of-Experts (MoE) language models: DeepSeek-V4-Pro with 1.6T parameters (49B activated) and DeepSeek-V4-Flash with 284B parameters (13B activated). Both support a context length of one million tokens.

As a next-generation MoE model family, DeepSeek-V4 sets a new bar for long-context efficiency, reasoning, and performance across coding, mathematics, and agentic tasks, helping developers build complex AI applications more efficiently and reliably.

Try DeepSeek-V4-Pro & DeepSeek-V4-Flash on SiliconFlow

Cost-effective Pricing (Cached Input / Input / Output, per 1M tokens): DeepSeek-V4-Pro at $0.145 / $1.74 / $3.48; DeepSeek-V4-Flash at $0.028 / $0.14 / $0.28.

Seamless Integration: Works with your existing development ecosystem. Deploy via SiliconFlow's OpenAI-compatible API through Cline, Gen-CLI, Kilo Code, and Roo Code; use the Anthropic-compatible API with Claude Code; plug into agents like OpenClaw and Hermes Agent; or use it directly in Dify, Janitor AI, Chub AI, ChatHub, Chatbox, and Sider. The models are also available through OpenRouter.
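To make the per-million-token prices above concrete, here is a minimal Python sketch that estimates the cost of a single request. The prices are copied from the pricing line; the function name `estimate_cost` and the assumption that cached tokens are a subset of the input tokens billed at the cached rate are illustrative, not taken from SiliconFlow's billing documentation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """USD per 1M tokens, in the order the post lists them."""
    cached_input: float
    uncached_input: float
    output: float


# Prices copied from the pricing line in this post.
PRICES = {
    "DeepSeek-V4-Pro": Pricing(cached_input=0.145, uncached_input=1.74, output=3.48),
    "DeepSeek-V4-Flash": Pricing(cached_input=0.028, uncached_input=0.14, output=0.28),
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int,
                  cached_tokens: int = 0) -> float:
    """Estimate the USD cost of one request.

    Assumption: `cached_tokens` is the portion of `input_tokens` that hits
    the cache and is billed at the cached-input rate instead of the input rate.
    """
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    price = PRICES[model]
    uncached_tokens = input_tokens - cached_tokens
    total = (cached_tokens * price.cached_input
             + uncached_tokens * price.uncached_input
             + output_tokens * price.output)
    return total / 1_000_000


# Example: an 800k-token prompt, 600k of it cached, with a 4k-token answer.
pro_cost = estimate_cost("DeepSeek-V4-Pro", 800_000, 4_000, cached_tokens=600_000)
flash_cost = estimate_cost("DeepSeek-V4-Flash", 800_000, 4_000, cached_tokens=600_000)
print(f"Pro: ${pro_cost:.4f}  Flash: ${flash_cost:.4f}")
```

For long-context workloads that resend the same large prefix, the cached-input rate dominates the bill, so the cache hit ratio is worth measuring before comparing the two models.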

Key Features & Innovations

DeepSeek-V4 pairs a hybrid attention architecture with further architectural and training innovations, achieving both a 1M-token context window and substantially lower inference cost.

Two Model Variants: DeepSeek-V4-Pro (1.6T parameters, 49B activated) and DeepSeek-V4-Flash (284B parameters, 13B activated) for different performance-efficiency trade-offs.

Three Reasoning Modes: Non-think for fast responses, Think High for complex problem-solving, and Think Max for pushing reasoning boundaries.

Hybrid Attention Architecture: Combines Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA), requiring only 27% of the inference FLOPs and 10% of the KV cache of DeepSeek-V3.2.

Manifold-Constrained Hyper-Connections (mHC): Strengthens residual connections to enhance signal propagation stability across layers while preserving model expressivity.

Muon Optimizer: Enables faster convergence and greater training stability during the pre-training phase.

Trained on 32T Tokens: Comprehensive pre-training on diverse, high-quality data followed by domain-specific expert cultivation and unified model consolidation.
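The efficiency figures in the list above are stated as ratios relative to DeepSeek-V3.2. The short sketch below converts those ratios into percentage reductions and applies them to a baseline. The 80 GiB baseline KV-cache size is a purely hypothetical number chosen for illustration, not a measurement of either model.

```python
# Ratios relative to DeepSeek-V3.2, as stated in the feature list.
FLOPS_RATIO = 0.27      # V4 needs 27% of the inference FLOPs
KV_CACHE_RATIO = 0.10   # V4 needs 10% of the KV cache


def reduction_percent(ratio: float) -> float:
    """Convert 'needs `ratio` times the baseline' into a percent reduction."""
    if not 0.0 <= ratio <= 1.0:
        raise ValueError("ratio must be between 0 and 1")
    # Round to avoid floating-point noise such as 72.99999999999999.
    return round((1.0 - ratio) * 100.0, 6)


def scaled(baseline: float, ratio: float) -> float:
    """Apply a ratio to a baseline quantity (FLOPs, bytes, and so on)."""
    return baseline * ratio


print(f"FLOPs reduction:    {reduction_percent(FLOPS_RATIO):.0f}%")
print(f"KV cache reduction: {reduction_percent(KV_CACHE_RATIO):.0f}%")

# Hypothetical baseline: a KV cache of 80 GiB on the older model.
hypothetical_baseline_gib = 80.0
print(f"KV cache at the stated ratio: "
      f"{scaled(hypothetical_baseline_gib, KV_CACHE_RATIO):.1f} GiB")
```

Note that a 73% reduction in FLOPs does not by itself imply a 73% reduction in price or latency, since both also depend on memory bandwidth, batching, and serving setup.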

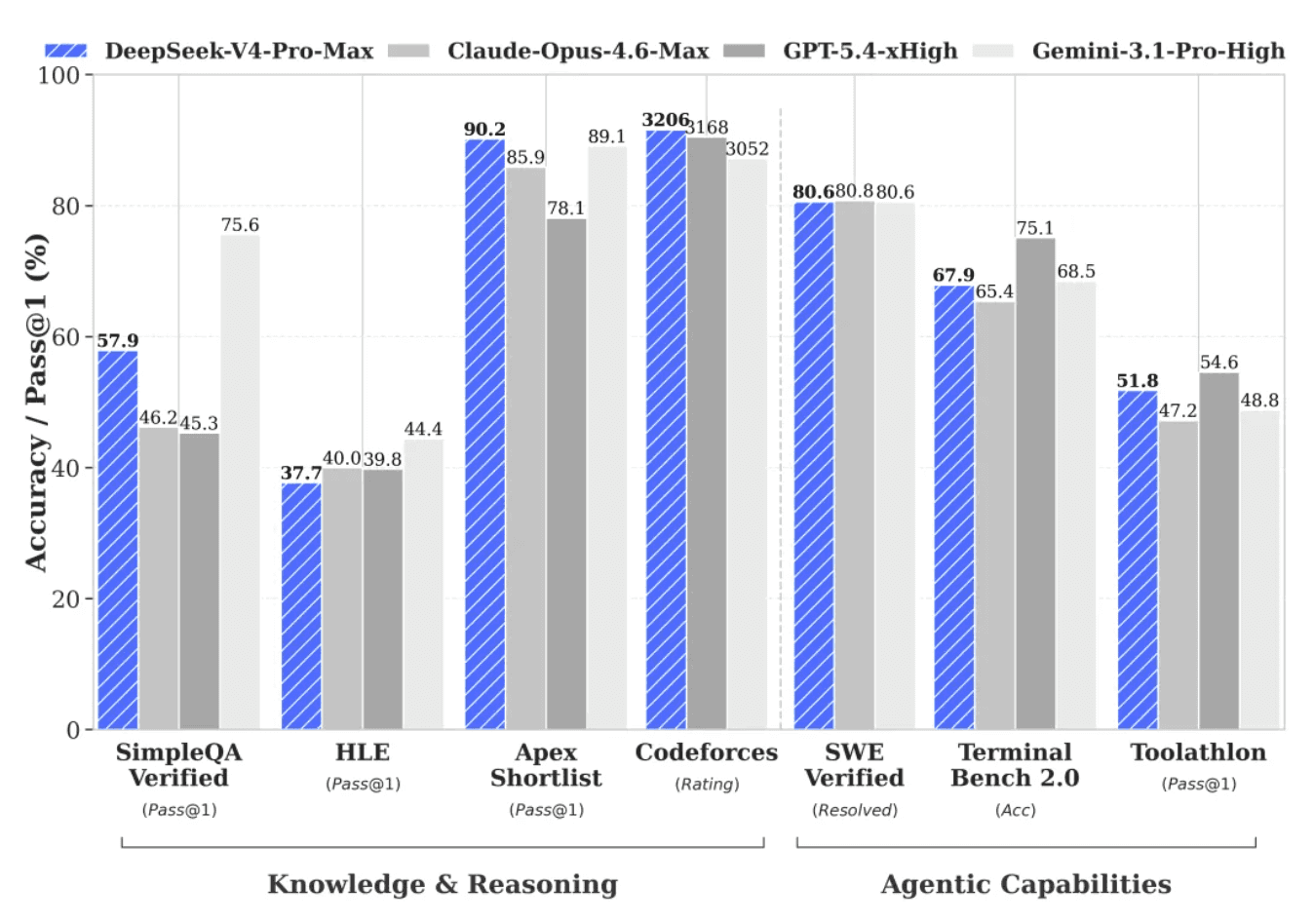

DeepSeek-V4-Pro-Max on Benchmarks

LiveCodeBench: 93.5% pass rate, outperforming leading closed-source models such as Claude Opus 4.6 and Gemini 3.1 Pro.

Codeforces Rating: 3206, the highest among all frontier models and a new state of the art for open-source models.

SWE-bench Verified: 80.6%, matching Claude Opus 4.6 Max.

MMLU-Pro: 87.5% accuracy, demonstrating strong knowledge capabilities across diverse domains.

DeepSeek-V4-Pro-Max consistently outperforms previous open-source models and narrows the gap with leading closed-source models in reasoning, coding, mathematics, and agentic tasks.

Real-World Applications

Advanced Code Development: DeepSeek-V4 excels at code generation, debugging, and complex algorithmic problem-solving across multiple programming languages.

Long-Document Analysis: The 1M-token context window enables comprehensive analysis of entire codebases, legal documents, research papers, and technical documentation without truncation.

Agentic AI Systems: Ideal for building autonomous agents that can plan, reason, and execute complex multi-step tasks.
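For the long-document use case above, it helps to check up front whether a set of files is likely to fit in the 1M-token window. The sketch below uses a rough characters-per-token heuristic. The value of 4 characters per token and the 8,000-token output reserve are assumptions for illustration only; actual counts depend on the model's tokenizer and the language of the text.

```python
import math

CONTEXT_WINDOW_TOKENS = 1_000_000  # context length stated in this post
CHARS_PER_TOKEN = 4.0              # assumption: rough heuristic for English text


def estimate_tokens(text: str, chars_per_token: float = CHARS_PER_TOKEN) -> int:
    """Roughly estimate the token count from the character count."""
    return math.ceil(len(text) / chars_per_token)


def fits_in_context(documents: list[str],
                    reserved_for_output: int = 8_000) -> tuple[bool, int]:
    """Return (fits, estimated_input_tokens) for a batch of documents.

    `reserved_for_output` is a hypothetical budget kept free for the reply.
    """
    total = sum(estimate_tokens(doc) for doc in documents)
    return total + reserved_for_output <= CONTEXT_WINDOW_TOKENS, total


# Example with synthetic documents standing in for real files.
docs = ["x" * 1_200_000, "y" * 800_000]
fits, tokens = fits_in_context(docs)
print(f"estimated input tokens: {tokens}, fits: {fits}")
```

Because this is only an estimate, leave a safety margin near the limit, or count tokens with the real tokenizer before sending.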

Get Started Immediately

Explore: Try DeepSeek-V4-Pro and DeepSeek-V4-Flash in the SiliconFlow playground.

Integrate: Use our OpenAI-compatible API. Explore the full API specifications in the SiliconFlow API documentation.
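As a starting point for the integration step, here is a minimal sketch of a chat completion request in the OpenAI-compatible format, using only the Python standard library. The base URL, the model identifier `deepseek-ai/DeepSeek-V4-Pro`, and the `SILICONFLOW_API_KEY` environment variable name are assumptions made for this example; confirm the actual values in the SiliconFlow API documentation.

```python
import json
import os
import urllib.request

# Assumptions (not stated in this post): check the API documentation.
BASE_URL = "https://api.siliconflow.com/v1"
MODEL_ID = "deepseek-ai/DeepSeek-V4-Pro"


def build_chat_request(prompt: str, api_key: str, model: str = MODEL_ID,
                       base_url: str = BASE_URL) -> urllib.request.Request:
    """Build a POST request in the OpenAI chat completions format."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the text of the first choice."""
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    # Hypothetical variable name; the call is skipped when no key is set.
    api_key = os.environ.get("SILICONFLOW_API_KEY")
    if api_key:
        print(send(build_chat_request("Summarize MoE models in one sentence.", api_key)))
```

Since the API follows the OpenAI format, an existing OpenAI client library should also work once its base URL and API key are pointed at SiliconFlow.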

Join our Discord community now →