
TL;DR: The Qwen3.6 series, featuring the 27B dense model and the 35B-A3B MoE model, is now available on SiliconFlow. Built on community feedback, Qwen3.6 delivers substantial upgrades in coding agents, agentic workflows, and multimodal understanding, with native thinking capabilities. On benchmarks, Qwen3.6-27B scores 77.2 on SWE-bench Verified, 53.5 on SWE-bench Pro, and 87.8 on GPQA Diamond, while Qwen3.6-35B-A3B scores 92.0 on RefCOCO and 50.8 on ODInW13. Both models match or exceed models several times their size. Start building with SiliconFlow's API today.

Through SiliconFlow's API, You Can Expect

Cost-effective Pricing: Qwen3.6-27B at $0.30/M input tokens and $3.20/M output tokens; Qwen3.6-35B-A3B at $0.20/M input tokens and $1.60/M output tokens.

Seamless Integration: Works instantly with your existing development ecosystem. Use SiliconFlow's OpenAI-compatible API with Cline, Gen-CLI, Kilo Code, and Roo Code, or its Anthropic-compatible API with Claude Code; plug it into agents like OpenClaw and Hermes Agent; use it out of the box in Dify, Janitor AI, Chub AI, ChatHub, Chatbox, and Sider; or access it through OpenRouter.
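To get a feel for what these list prices mean per request, here is a small sketch that applies them linearly to token counts. It assumes billing is purely per token at the rates listed above, that any thinking-mode reasoning tokens are billed as output tokens, and it ignores discounts or caching. The dictionary keys are just labels, not API model IDs.

```python
from decimal import Decimal

# List prices in USD per 1M tokens, as quoted above: (input, output).
PRICES_PER_MILLION = {
    "Qwen3.6-27B": (Decimal("0.30"), Decimal("3.20")),
    "Qwen3.6-35B-A3B": (Decimal("0.20"), Decimal("1.60")),
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> Decimal:
    """Estimated USD cost of one request, assuming linear per-token billing."""
    in_price, out_price = PRICES_PER_MILLION[model]
    million = Decimal(1_000_000)
    return (in_price * input_tokens + out_price * output_tokens) / million


if __name__ == "__main__":
    # A hypothetical coding-agent turn: large context in, modest answer out.
    for name in PRICES_PER_MILLION:
        print(name, estimate_cost(name, 20_000, 2_000))
```

For that example turn (20,000 input tokens, 2,000 output tokens), the estimate is $0.0124 on Qwen3.6-27B and $0.0072 on Qwen3.6-35B-A3B. `Decimal` keeps the sub-cent amounts free of floating-point drift.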

Overview: Flagship-level Coding Capabilities

Qwen3.6, the latest release in Alibaba's open-source Qwen family, comes in two variants, Qwen3.6-27B and Qwen3.6-35B-A3B, both of which support multimodal input in thinking and non-thinking modes. The release marks a major leap in agentic coding performance across the board. Engineered for stability and production use, Qwen3.6 gives developers a smoother, faster, and more productive coding workflow.
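Because each variant serves both thinking and non-thinking modes, the mode can be chosen per request. The sketch below builds an OpenAI-style request body with a boolean `enable_thinking` field. Treat that field name and the model ID `Qwen/Qwen3.6-27B` as assumptions to verify in the SiliconFlow API documentation before use.

```python
import json

# Assumed model ID; check the SiliconFlow model list for the exact name.
MODEL_ID = "Qwen/Qwen3.6-27B"


def build_chat_payload(prompt: str, thinking: bool, max_tokens: int = 1024) -> dict:
    """Build an OpenAI-style chat completion body for one user prompt.

    `enable_thinking` is assumed to be the request-level switch between
    thinking and non-thinking modes; verify it against the API reference.
    """
    return {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
        "enable_thinking": thinking,
    }


if __name__ == "__main__":
    # Same checkpoint, two modes: deliberate reasoning vs. fast direct answers.
    print(json.dumps(build_chat_payload("Refactor this module for testability.", thinking=True), indent=2))
    print(json.dumps(build_chat_payload("What does HTTP 404 mean?", thinking=False), indent=2))
```

Thinking mode suits multi-step coding and debugging, where extra reasoning tokens pay off; non-thinking mode keeps latency and output-token spend down for quick lookups.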

Qwen3.6 Highlights

Agentic Coding: Delivers smoother, more precise execution for frontend workflows and repository-level reasoning.

Thinking Preservation: Retains reasoning context across chat history, streamlining iterative development and reducing overhead.
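On the client side, one way to work with thinking preservation is to keep each assistant turn's reasoning alongside its answer in the history you send back. This minimal sketch assumes responses expose reasoning under a `reasoning_content` field and that the server accepts that field on assistant messages. Both are assumptions to check against the API reference, and `ChatHistory` is a hypothetical helper, not part of any SDK.

```python
from dataclasses import dataclass, field


@dataclass
class ChatHistory:
    """Client-side chat history that can carry each turn's reasoning forward.

    Assumption: the API returns reasoning as `reasoning_content` on the
    assistant message and accepts it back on later requests.
    """

    messages: list = field(default_factory=list)

    def add_user(self, content: str) -> None:
        self.messages.append({"role": "user", "content": content})

    def add_assistant(self, message: dict, keep_reasoning: bool = True) -> None:
        # Copy only the fields we intend to resend on the next request.
        entry = {"role": "assistant", "content": message.get("content", "")}
        reasoning = message.get("reasoning_content")
        if keep_reasoning and reasoning:
            entry["reasoning_content"] = reasoning
        self.messages.append(entry)
```

Passing `keep_reasoning=False` drops the reasoning for a given turn, which is a simple way to compare token usage with and without preserved context.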

Qwen3.6 Key Features

Qwen3.6-27B (Fully Open-Source Dense Model, 27B)

Flagship agentic coding performance that surpasses Qwen3.5-397B-A17B.

Robust text and multimodal reasoning capabilities.

Qwen3.6-35B-A3B (Fully Open-Source MoE Model, 35B total / 3B active)

Exceptional agentic coding that goes toe-to-toe with much larger models.

Strong multimodal perception and reasoning ability.
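The "A3B" and "A17B" suffixes give the number of parameters active per token. Active parameters roughly drive per-token compute in an MoE model, while all parameters still have to be held in memory. A quick calculation using only the sizes encoded in the model names:

```python
def active_fraction(total_b: float, active_b: float) -> float:
    """Share of parameters used per token (1.0 for a dense model)."""
    return active_b / total_b


# (total, active) parameters in billions, taken from the model names.
MODELS = {
    "Qwen3.6-35B-A3B": (35, 3),
    "Qwen3.5-397B-A17B": (397, 17),
    "Qwen3.6-27B (dense)": (27, 27),
}

if __name__ == "__main__":
    for name, (total, active) in MODELS.items():
        print(f"{name}: {active}B of {total}B active ({active_fraction(total, active):.1%})")
```

This prints roughly 8.6% for Qwen3.6-35B-A3B, 4.3% for Qwen3.5-397B-A17B, and 100.0% for the dense Qwen3.6-27B.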

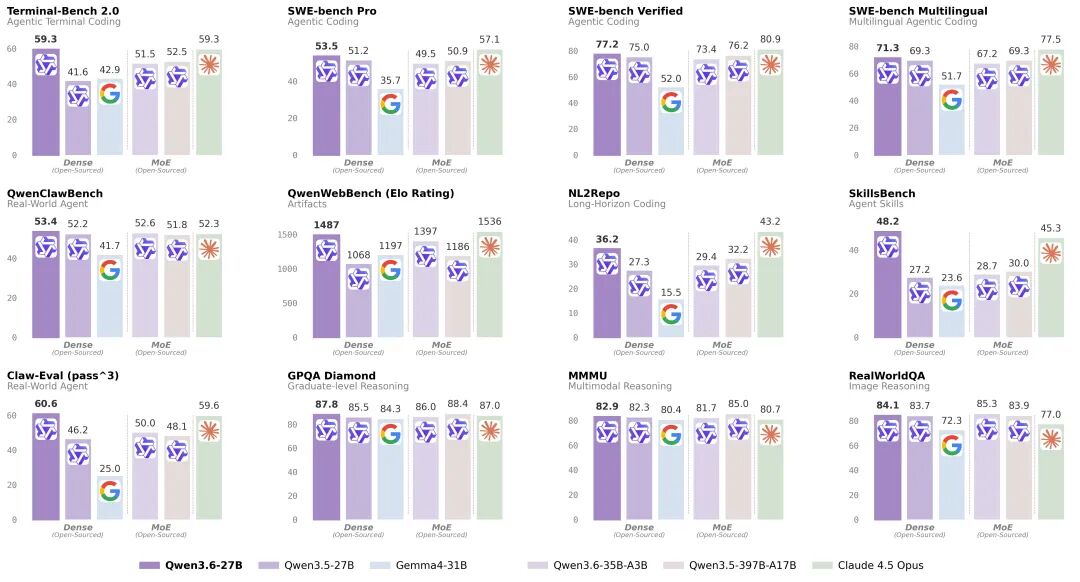

Qwen3.6-27B on Benchmarks

Qwen3.6-27B marks a major leap forward in agentic coding for dense models. With 27B parameters, it surpasses the much larger Qwen3.5-397B-A17B (397B total / 17B active) across the board on major coding evaluations. The margins speak for themselves: 77.2 vs. 76.2 on SWE-bench Verified, 53.5 vs. 50.9 on SWE-bench Pro, 59.3 vs. 52.5 on Terminal-Bench 2.0, and a particularly striking 48.2 vs. 30.0 on SkillsBench. Beyond outperforming its larger predecessor, it also significantly outpaces all peer-scale dense models. This efficiency extends to reasoning tasks as well, where Qwen3.6-27B scores 87.8 on GPQA Diamond, competitive with models several times its size.
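The margins quoted above are absolute score differences. A short script reproduces them from the four pairs of numbers in this paragraph, with no other data assumed:

```python
# Scores quoted above: (Qwen3.6-27B, Qwen3.5-397B-A17B).
SCORES = {
    "SWE-bench Verified": (77.2, 76.2),
    "SWE-bench Pro": (53.5, 50.9),
    "Terminal-Bench 2.0": (59.3, 52.5),
    "SkillsBench": (48.2, 30.0),
}


def margins(scores: dict) -> dict:
    """Absolute point gain (new minus old), rounded to one decimal."""
    return {name: round(new - old, 1) for name, (new, old) in scores.items()}


if __name__ == "__main__":
    for name, delta in margins(SCORES).items():
        print(f"{name}: +{delta} points")
```

It prints gains of 1.0, 2.6, 6.8, and 18.2 points. Rounding to one decimal hides floating-point noise in the subtractions.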

Like Qwen3.6-35B-A3B, Qwen3.6-27B is natively multimodal, consolidating vision-language thinking and non-thinking modes into a single unified checkpoint. It processes interleaved text, image, and video inputs, powering advanced multimodal reasoning, document understanding, and visual question answering.
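Interleaved inputs map naturally onto the OpenAI-style message format, where a single user message carries an ordered list of text and image parts. The helpers below assume SiliconFlow accepts the standard `image_url` part type, including base64 `data:` URLs, for Qwen3.6. The helper names are hypothetical, and video parts are omitted because their exact request format is not covered here.

```python
import base64


def text_part(text: str) -> dict:
    """A plain-text content part."""
    return {"type": "text", "text": text}


def image_url_part(url: str) -> dict:
    """An image referenced by a publicly reachable URL."""
    return {"type": "image_url", "image_url": {"url": url}}


def image_bytes_part(data: bytes, mime: str = "image/png") -> dict:
    """An inline image encoded as a base64 data URL."""
    encoded = base64.b64encode(data).decode("ascii")
    return {"type": "image_url", "image_url": {"url": f"data:{mime};base64,{encoded}"}}


def interleaved_user_message(*parts: dict) -> dict:
    """Combine content parts, in the given order, into one user message."""
    return {"role": "user", "content": list(parts)}


if __name__ == "__main__":
    msg = interleaved_user_message(
        text_part("Here is the old dashboard:"),
        image_url_part("https://example.com/dashboard-v1.png"),
        text_part("and the new one:"),
        image_url_part("https://example.com/dashboard-v2.png"),
        text_part("List the layout changes between them."),
    )
    print(msg)
```

Parts keep their order, so the text around each image tells the model which picture a question refers to.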

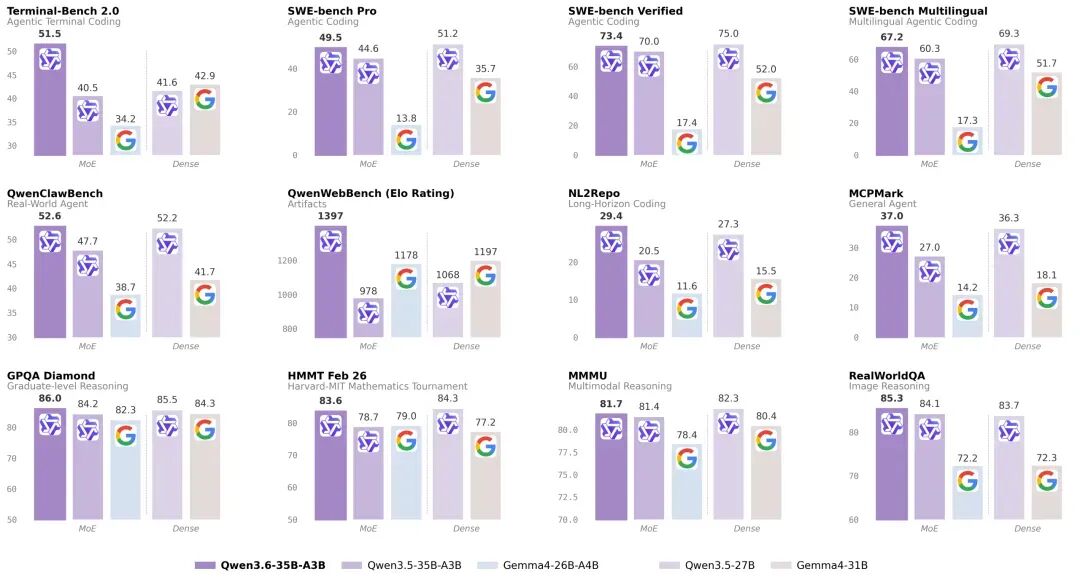

Qwen3.6-35B-A3B on Benchmarks

Activating just 3B parameters per token, Qwen3.6-35B-A3B outperforms the dense Qwen3.5-27B across key coding benchmarks. More impressively, it eclipses its direct predecessor, Qwen3.5-35B-A3B, with dramatic gains in agentic coding and reasoning tasks.

Qwen3.6-35B-A3B delivers perception and multimodal reasoning capabilities that defy its compact scale. Across most vision-language benchmarks, it matches Claude Sonnet 4.5, even edging it out on several tasks. Its prowess is particularly pronounced in spatial intelligence, scoring 92.0 on RefCOCO and 50.8 on ODInW13.
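Grounding benchmarks such as RefCOCO ask the model to localize an object described in text. In an application you then need to map the returned box onto the original image. This sketch assumes the model emits `[x1, y1, x2, y2]` on a 0 to 1000 normalized grid, a convention used by earlier Qwen-VL releases. Confirm the coordinate format for Qwen3.6, and parse the box out of the response text yourself, before relying on it. `to_pixels` is a hypothetical helper.

```python
def to_pixels(box, width: int, height: int, scale: int = 1000) -> tuple:
    """Map an [x1, y1, x2, y2] box on a 0..scale grid to pixel coordinates.

    Assumption: coordinates are normalized to a 0-1000 grid; check the
    convention actually used by Qwen3.6 before using this in production.
    """
    x1, y1, x2, y2 = box
    return (
        round(x1 / scale * width),
        round(y1 / scale * height),
        round(x2 / scale * width),
        round(y2 / scale * height),
    )


if __name__ == "__main__":
    # A box covering the lower-left region of a 1920x1080 screenshot.
    print(to_pixels([100, 250, 500, 1000], 1920, 1080))
```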

Real-World Applications

Software Engineering Automation: Build AI agents that autonomously fix bugs, implement features, and navigate large codebases.

Multimodal Document Intelligence: Extract structured information from complex documents, charts, and diagrams with industry-leading OCR and document understanding capabilities.

Agentic Workflows & Tool Calling: Create sophisticated agents with MCP integration, multi-step reasoning, and tool orchestration for terminal automation, web browsing, and API integration.
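For tool calling over the OpenAI-compatible API, you describe tools with a JSON Schema in the `tools` field. The model replies with `tool_calls` whose `arguments` arrive as a JSON string. The sketch below shows the client side of one agent step: a tool definition plus a dispatcher that runs each call and produces `tool` messages to append to the history. `run_shell` is a hypothetical stand-in tool that does not actually execute anything.

```python
import json

# OpenAI-style tool schema, sent in the request's `tools` field.
TOOLS = [
    {
        "type": "function",
        "function": {
            "name": "run_shell",
            "description": "Run a shell command in the project sandbox.",
            "parameters": {
                "type": "object",
                "properties": {"command": {"type": "string"}},
                "required": ["command"],
            },
        },
    }
]


def run_shell(command: str) -> str:
    # Placeholder: a real agent would execute in a sandbox and capture output.
    return f"(pretend output of: {command})"


HANDLERS = {"run_shell": run_shell}


def dispatch_tool_calls(assistant_message: dict) -> list:
    """Run each requested tool and return `tool` messages for the history."""
    results = []
    for call in assistant_message.get("tool_calls") or []:
        fn = call["function"]
        args = json.loads(fn["arguments"] or "{}")  # arguments arrive as a JSON string
        output = HANDLERS[fn["name"]](**args)
        results.append({"role": "tool", "tool_call_id": call["id"], "content": output})
    return results
```

In a full loop, you send the history, now including these `tool` messages, back to the model and repeat until it answers without requesting further tools.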

Get Started Immediately

Explore: Try Qwen3.6-27B and Qwen3.6-35B-A3B in the SiliconFlow playground.

Integrate: Use our OpenAI-compatible API. Explore the full API specifications in the SiliconFlow API documentation.

Python Example for Qwen3.6-27B API Usage:
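A minimal sketch that calls the OpenAI-compatible chat completions endpoint using only the Python standard library. The base URL `https://api.siliconflow.com/v1`, the model ID `Qwen/Qwen3.6-27B`, and the `SILICONFLOW_API_KEY` environment variable name are assumptions. Check the exact values in the SiliconFlow API documentation and model list.

```python
import json
import os
import urllib.request

# Assumed endpoint and model ID: verify both in the SiliconFlow docs.
API_URL = "https://api.siliconflow.com/v1/chat/completions"
MODEL_ID = "Qwen/Qwen3.6-27B"


def build_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build a POST request for an OpenAI-compatible chat completion."""
    body = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 1024,
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def chat(prompt: str, api_key: str) -> str:
    """Send one prompt and return the assistant's reply text."""
    with urllib.request.urlopen(build_request(prompt, api_key), timeout=120) as resp:
        data = json.load(resp)
    message = data["choices"][0]["message"]
    # In thinking mode, reasoning may arrive separately (assumed `reasoning_content`).
    if message.get("reasoning_content"):
        print("[reasoning]", message["reasoning_content"])
    return message["content"]


if __name__ == "__main__":
    key = os.environ.get("SILICONFLOW_API_KEY")  # hypothetical variable name
    if key:
        print(chat("Write a Python function that checks whether a string is a palindrome.", key))
    else:
        print("Set SILICONFLOW_API_KEY to run this example.")
```

If you prefer the official `openai` Python SDK, the same request works by constructing `OpenAI(api_key=..., base_url=...)` with SiliconFlow's base URL and calling `client.chat.completions.create(model=..., messages=...)`.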
